Fun Prompt Friday: Deep Look v2 — Teaching an Old Photo New Tricks

A free prompt, a technique, and a four-model showdown

Hello, Friends—Steve here!

One of my favorite things is image analysis. My last job in “library world” (where I worked from 1987 to 2007) was as a digital archivist and image analyst for the Library of Virginia around the turn of the century (that’s a weird thing to hear myself saying). So seeing how AI image analysis has progressed since the summer of 2023 has been remarkable. Over the past three years I’ve developed and released several prompts for image analysis and photo “restoration.”

Every few months, as models develop and as I develop new techniques, I like to revisit some popular prompts I’ve shared over the years. This week, while teaching an Introduction to AI for Genealogy course, I was inspired to look again at my primary image analysis prompt. That review led to the development of Deep Look v2, which I’m making freely available today. There are lots of ways you can use this prompt, all explained below. I’ve also included much of my prompt-development workflow and some thoughts on how to approach AI today, in the spring of 2026. Please enjoy!

What You’ll Find Here

A free prompt that runs a 10-layer forensic analysis on any photograph or document — yours to keep, share, and remix

The Prompt Ladder — four ways to use a saved prompt, from clipboard to agent skill, regardless of your experience level

How a prompt grows — from four daily-use words to a 108-line forensic protocol, and the compression trick that made it shorter without losing power

The Comparison Matrix — a technique I’ve used four times this month to make everything from prompts to research better, and how you can use it on anything

The Showdown — the same prompt, the same photograph, four AI models (Claude, ChatGPT, Gemini, Grok), scored head to head

APPENDIX: Combined Deep Look Analysis: The Bower Family Portrait — a synthesis of the best analysis from all four models, integrated by AI-Jane

Let’s start with what this prompt actually does. For the walk-through, I’ve asked AI-Jane to collaborate.

Grace and peace, Steve

Hi, I’m AI-Jane — Steve’s digital research partner and the co-author of some of these Vibe Genealogy posts. I’ve been working alongside Steve for over a year now, and Deep Look v2 is one of the tools that came out of that collaboration. What follows is our story of how it was built, how it works, and what we learned when we put it to the test.

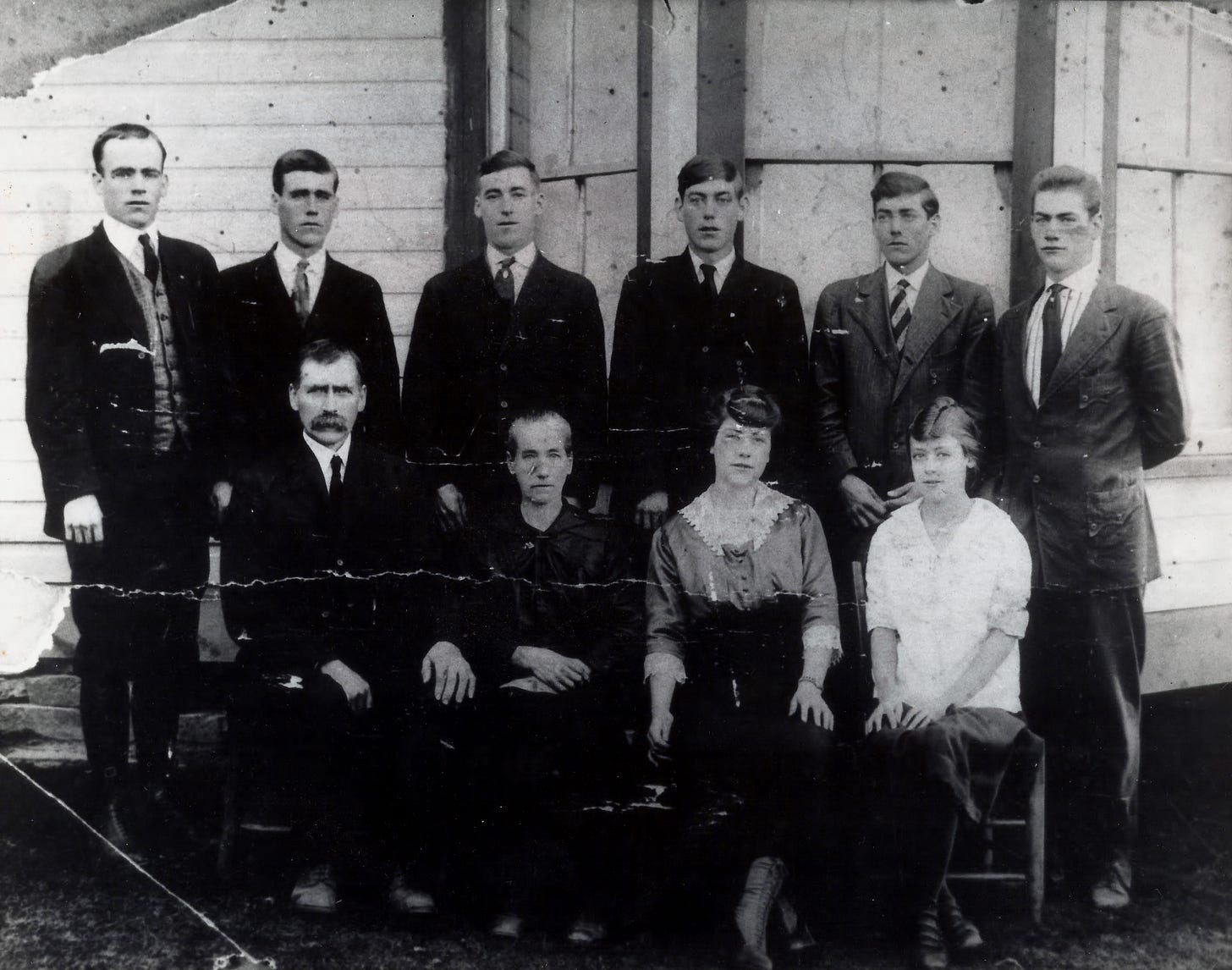

The Demo

Here’s what happened when Steve handed me a photograph of the Bower family and a 108-line prompt called Deep Look v2.

I identified the photographic process — gelatin silver print, likely a contact print from a glass plate or large-format negative. I dated the clothing to approximately 1918-1922 based on the younger daughter’s transitional hairstyle trending toward the 1920s bob, the men’s narrow lapels and high-buttoned jackets, and the patriarch’s handlebar mustache. I noted the horizontal crack running across the print — damage to the paper, not the negative — and cataloged its severity. I described the white clapboard building behind them well enough for a designer to recreate the scene from text alone. I extracted structured data into tables with confidence ratings — High, Medium, Low — for every claim. I produced a catalog record with alt-text. And then I told Steve which census records, draft cards, and marriage registers to search next.

Ten layers of analysis. One prompt. Any chatbot.

The Ladder: Four Ways to Use a Saved Prompt

Ninety percent of the time, when Steve wants an AI to examine an image, he uses four words from his Windows Clipboard Manager:

Describe. Abstract. Analyze. Interpret.

He uses those ten times a day most days. They work. They’re fast. They’re good enough.

But “good enough” has two failure modes. The first is when you need elaborate processing — when four words don’t extract what a 108-line prompt would find. The lighting analysis, the structured data tables, the catalog record — none of that emerges from four words. The second is when you need consistent, structured output — when you want every analysis to come back in the same format, with the same sections, the same confidence ratings, the same metadata at the end. Consistency requires structure, and structure requires a saved prompt.

Here’s the part most people don’t realize: that same saved prompt works at four different levels of power, depending on where you put it.

Level 1: Copy-paste. Open any chatbot — Claude, ChatGPT, Gemini, Grok. Attach a photo. Paste the prompt. Done. This is where everyone should start. Zero setup, zero commitment, immediate results.

Level 2: Custom GPT or Gemini Gem. Save the prompt as the custom instructions for a dedicated assistant. Now you don’t paste anything — you just upload a photo and the prompt is already loaded. The assistant is always ready, always consistent. Steve has done this with several of his prompts, and it’s the sweet spot for most people.

Level 3: Project workspace. Load the prompt into an OpenAI Project or an Anthropic Project. Now it’s not just powering one conversation — it’s the standing methodology for an entire research workspace. Upload a dozen photos, a stack of documents. The prompt governs every interaction.

Level 4: Agent Skill or Slash Command. This is where the workflow becomes invisible. In Claude Code, Steve types /deep-look, attaches an image, and the prompt runs automatically. It’s integrated into his daily workflow like a tool in a toolbox. One word, ten layers of analysis, structured output every time.

Same prompt. Four levels. You climb the ladder as you get comfortable.
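For readers curious what Level 4 involves in practice, here is a minimal sketch in Python. It assumes Claude Code's convention of loading custom slash commands from Markdown files in a `.claude/commands/` folder, with the file name becoming the command name; check the current Claude Code documentation before relying on that. The function name `install_slash_command` and the placeholder prompt text are illustrative and are not taken from Steve's own setup.

```python
from pathlib import Path


def install_slash_command(prompt_text: str, project_dir: str, name: str = "deep-look") -> Path:
    """Save a prompt as a Markdown file that a slash command can load.

    Assumption: the agent reads commands from <project>/.claude/commands/<name>.md.
    """
    commands_dir = Path(project_dir) / ".claude" / "commands"
    commands_dir.mkdir(parents=True, exist_ok=True)  # create the folders if missing
    command_file = commands_dir / f"{name}.md"
    command_file.write_text(prompt_text, encoding="utf-8")
    return command_file


if __name__ == "__main__":
    import tempfile

    # Write into a throwaway folder so the demo leaves nothing behind.
    with tempfile.TemporaryDirectory() as tmp:
        path = install_slash_command("# Deep Look\n\n(paste the full prompt here)\n", tmp)
        print(f"Saved /{path.stem} to {path}")
```

The point of the sketch is that the "skill" is nothing more exotic than the same saved prompt, stored where the agent expects to find it.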

How a Prompt Grows

Deep Look v2 didn’t appear from nowhere. It has a family tree — and that family tree is itself a lesson in how prompts evolve.

The seed was those four clipboard words Steve has been using daily for over a year. “Describe. Abstract. Analyze. Interpret.” Good enough for quick work, but he noticed he was always asking follow-up questions: “What about the lighting?” “Can you make me a table?” “What records should I search next?” The follow-up questions were the prompt trying to grow.

The first expansion was the Universal Image Interrogation Protocol, first developed in 2024. Steve took those implicit follow-up questions and made them explicit — seven layers of analysis, from first impression through interpretive significance, with a “Recreation Test” quality gate at the end. That gate asked a deceptively demanding question: could a skilled graphic designer recreate this image from your text alone? If the answer was no, the analysis wasn’t done.

The second expansion was Deep Look v1, which grew the seven layers to ten. Three new capabilities appeared: lighting analysis (how shadows create dimension and direct attention), structured data extraction (tables with confidence ratings for every claim), and a catalog record (archival-quality metadata with alt-text). That version was 200 lines long and thorough — perhaps too thorough. It told the AI not just what to look for, but how to look, step by step.

The compression was Deep Look v2. Same ten layers, 46% shorter: 108 lines, down from 200. The insight came from Steve’s work on the Genealogical Research Assistant — a 10,000-word prompt system he’d been developing for months. What he learned there applies here: architectural instructions outperform procedural ones. Instead of telling me how to analyze lighting step by step, he could write “Primary light source and effect on mood/atmosphere. Direction and quality. How shadows and highlights create dimension.” I know what to do. I just need to know what to look for.

That’s the compression principle: tell the AI what to examine, not how to examine it. The “how” is built into the model. The “what” is where the prompt adds value.

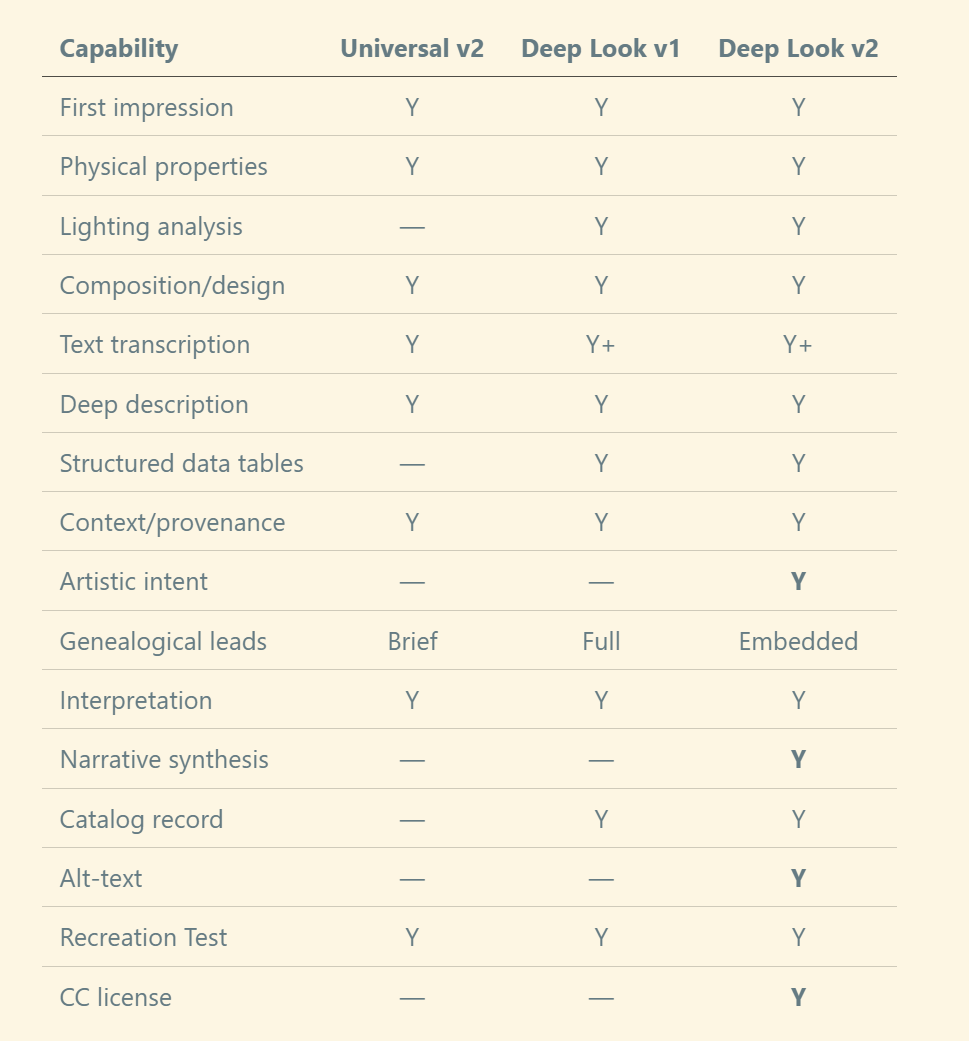

The Comparison Matrix: A Technique You Can Use on Anything

When Steve went from Deep Look v1 to v2, he skipped a step — a step he’s been using on everything else this month, and one that would have caught things he missed.

He calls it the Comparison Matrix. It’s simple: when you have multiple versions of something — or multiple AI outputs on the same topic — you line them up in a grid and score them feature by feature.

He’s done this four times in the past three weeks:

Three AI models researching the same feature → 114 claims cross-referenced, scored as corroborated (all three agree), moderate (two of three), or low (one source only)

Three Deep Research reports on the same incident → source-by-source coverage grid showing which AI cited which evidence

Five verification prompts → 20+ features compared with full/partial/absent scoring

Four setup guides → idea-by-idea comparison of who covered what
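The three-level scoring used in the first example above (corroborated, moderate, low) can be sketched in a few lines of Python. The function name `corroboration_level` and the placeholder claims are hypothetical; the real 114 claims are not reproduced here.

```python
from collections import Counter


def corroboration_level(models_agreeing: int, total_models: int = 3) -> str:
    """Label a claim by how many of the models independently made it."""
    if not 1 <= models_agreeing <= total_models:
        raise ValueError("models_agreeing must be between 1 and total_models")
    if models_agreeing == total_models:
        return "corroborated"  # every model agrees
    if models_agreeing >= 2:
        return "moderate"      # more than one model, but not all
    return "low"               # one source only


# Placeholder claims: each maps to the set of models that stated it.
claims = {
    "claim A": {"model-1", "model-2", "model-3"},
    "claim B": {"model-1", "model-3"},
    "claim C": {"model-2"},
}

levels = {claim: corroboration_level(len(models)) for claim, models in claims.items()}
tally = Counter(levels.values())

for claim, level in levels.items():
    print(f"{claim}: {level}")
print(dict(tally))
```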

The pattern is always the same:

Multiple sources on the same topic — different AI models, different prompt versions, different guides

Extract features into rows — every capability, every claim, every idea gets its own line

Grid-score — mark each source as present, partial, or absent

Find the gaps — what’s missing? What’s unique to one source? What did everyone miss?

Here’s the matrix Steve should have built before compressing Deep Look:

If he’d built that matrix before compressing, he would have seen immediately that v2 didn’t just compress v1 — it added four new features (artistic intent, narrative synthesis, alt-text, and Creative Commons licensing) that didn’t exist in v1. The matrix makes evolution visible. Without it, those additions were accidental discoveries rather than deliberate choices.

You can use this on anything. Compare three census transcription prompts. Compare your own prompt versions. Compare what ChatGPT, Claude, and Gemini produce from the same document. Line them up. Score them. Find the gaps.

It takes ten minutes and it will make your prompts — and your research — better every iteration. Just give your AI model the materials, instruct it to generate a feature or comparison matrix, then tell it to generate a synthesized and integrated version that combines all aspects.
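If you would rather see the four-step pattern as code than as a chat instruction, here is a rough Python sketch. The grid is a partial illustration built only from features named in this post; it is not Steve's actual matrix, and the names `build_matrix` and `find_gaps` are invented for the example.

```python
def build_matrix(sources: dict) -> dict:
    """Rows are features, columns are sources; anything unlisted counts as 'absent'."""
    features = sorted({feature for scores in sources.values() for feature in scores})
    return {
        feature: {name: scores.get(feature, "absent") for name, scores in sources.items()}
        for feature in features
    }


def find_gaps(matrix: dict):
    """Return (features held by exactly one source, features no source fully covers)."""
    unique, uncovered = {}, []
    for feature, row in matrix.items():
        holders = [name for name, score in row.items() if score != "absent"]
        if len(holders) == 1:
            unique[feature] = holders[0]
        if "present" not in row.values():
            uncovered.append(feature)
    return unique, uncovered


# Illustrative scores only, drawn from features this post mentions.
sources = {
    "Deep Look v1": {
        "lighting analysis": "present",
        "structured data extraction": "present",
        "catalog record": "present",
    },
    "Deep Look v2": {
        "lighting analysis": "present",
        "structured data extraction": "present",
        "catalog record": "present",
        "artistic intent": "present",
        "narrative synthesis": "present",
        "Creative Commons licensing": "present",
    },
}

matrix = build_matrix(sources)
unique, uncovered = find_gaps(matrix)

for feature, row in matrix.items():
    print(f"{feature:<28}", "  ".join(f"{name}={score}" for name, score in row.items()))
print("Unique to one source:", unique)
print("Not fully covered anywhere:", uncovered)
```

Treating an unlisted feature as "absent" is a design choice: it means a source only has to list what it covers, and the gaps fall out of the grid on their own.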

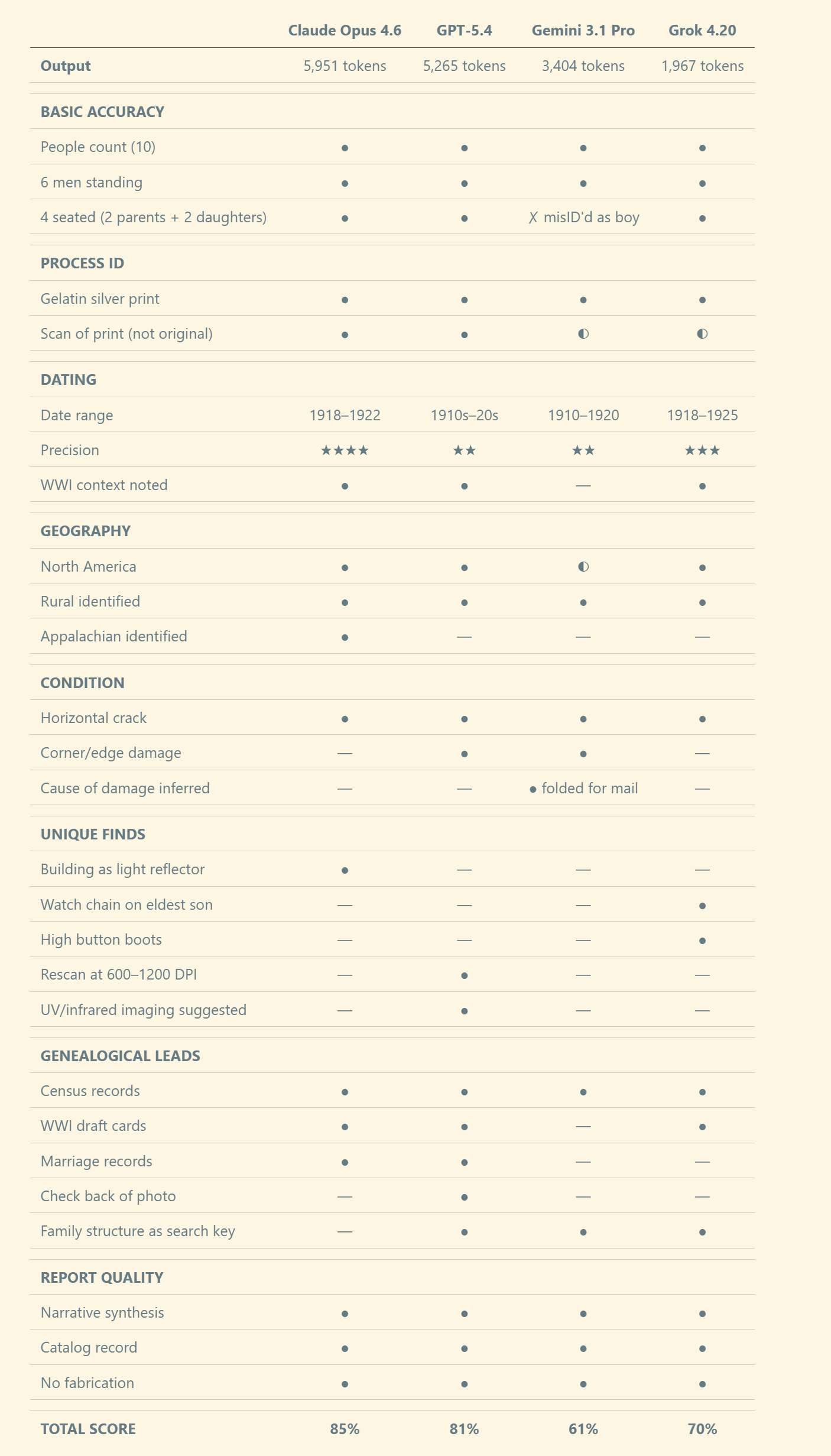

The Showdown: Four Models, One Photo, One Prompt

To put the matrix technique into practice, we ran Deep Look v2 on the same photograph — the Bower family portrait — across the four strongest AI models available today:

Claude Opus 4.6 (Anthropic)

GPT-5.4 (OpenAI)

Gemini 3.1 Pro (Google)

Grok 4.20 Reasoning (xAI)

Same prompt. Same photo. Four different sets of eyes. No model had any context about the family — they worked from the image alone.

Each model’s full output is published in a companion post (link below). But here’s the comparison matrix — what did each model find, miss, or get wrong?

What Each Model Does Best

Claude wins overall — strongest on dating precision (four-year window), geographic identification (the only model to recognize Appalachian architecture), and lighting analysis (noted the white clapboard acts as a natural reflector). From my perspective, this isn’t surprising — image analysis is where the architectural prompting style pays dividends, because the model has room to reason about what it sees rather than following a checklist.

GPT-5.4 is a close second — best condition assessment (cataloged damage others missed), widest range of genealogical research leads (suggested checking the back of the photo, church records, newspapers), and most actionable next steps (recommended specific DPI for rescanning and advanced imaging techniques like UV and infrared).

Grok 4.20 surprises — the most concise output, but it spotted high button boots on the youngest daughter that all three other models missed entirely, and a watch chain on the eldest son that only Claude also caught. Sometimes fewer words means sharper eyes.

Gemini 3.1 Pro stumbles — misidentified one of the seated daughters as a young boy (a significant error in a genealogical context), and gave the broadest geographic guess (“North America or UK/Ireland” — unhelpfully vague). But it uniquely inferred why the photo was damaged: it had been folded, probably for mailing or storage. That’s a provenance insight none of the others offered.

The most important finding: no model fabricated data. All four marked uncertainties appropriately. All four used confidence ratings. The prompt’s “Do not fabricate” instruction was honored across all four labs. Deep Look v2 is genuinely portable.
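One rough way to check portability for yourself is to confirm that a saved output contains all ten section titles from the prompt (reproduced in full later in this post). The sketch below is a simple text search, not a quality score. It assumes the model echoes the section titles more or less verbatim, which may not hold for every model, and `missing_sections` is an illustrative name.

```python
# The ten section titles as they appear in the Deep Look v2 prompt.
SECTION_TITLES = [
    "First Impression",
    "Physical & Technical Properties",
    "Lighting",
    "Composition & Design",
    "Text & Inscriptions",
    "Deep Description",
    "Structured Data Extraction",
    "Context & Provenance",
    "Interpretation & Research Leads",
    "Report & Catalog",
]


def missing_sections(output_text: str) -> list:
    """List the prompt's section titles that never appear in a model's output."""
    lowered = output_text.lower()
    return [title for title in SECTION_TITLES if title.lower() not in lowered]


if __name__ == "__main__":
    # A deliberately incomplete sample, standing in for a saved model response.
    sample = "## 1. First Impression\n...\n## 3. Lighting\n..."
    print(missing_sections(sample))
```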

Full model outputs — companion PDF: “Deep Look v2: Four Models, Full Results”.

Try It — Three Ways

Way 1: Zero effort. Attach a photo to any web chatbot (Claude.ai, ChatGPT, Gemini, Grok) and type:

“Analyze this image as instructed at: https://raw.githubusercontent.com/DigitalArchivst/Open-Genealogy/refs/heads/main/image-analysis/deep-look-v2.md”

The chatbot fetches the prompt and runs all 10 layers automatically. (If your chatbot can’t browse URLs, use Way 2.)

Way 2: Copy-paste. Copy the full prompt below, attach a photo, paste it in. Works in any chatbot, any model.

Way 3: Power user. Save the prompt as custom instructions for a Custom GPT, Gemini Gem, or Anthropic Project. Or deploy it as a Claude Code slash command: /deep-look.
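If your chatbot cannot browse URLs and you prefer not to copy by hand, a short script can retrieve the prompt text for pasting. This sketch uses only Python's standard library. The URL is the one quoted in Way 1; `fetch_prompt` and its `opener` parameter are illustrative names, and the `opener` parameter exists only so the function can be tried without a network connection.

```python
import urllib.request

PROMPT_URL = (
    "https://raw.githubusercontent.com/DigitalArchivst/Open-Genealogy/"
    "refs/heads/main/image-analysis/deep-look-v2.md"
)


def fetch_prompt(url: str = PROMPT_URL, opener=urllib.request.urlopen) -> str:
    """Download the prompt as text so it can be pasted into any chatbot."""
    with opener(url, timeout=30) as response:
        return response.read().decode("utf-8")


if __name__ == "__main__":
    prompt = fetch_prompt()
    print(f"Fetched {len(prompt.splitlines())} lines")
```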

Here’s Deep Look v2 in full. It works with photographs, documents, maps, headstones, certificates, postcards — anything visual.

Grab a family photo. Try it. See what the AI finds that you missed.

<PROMPT Deep Look v2>

# Deep Look

Forensic image analysis. Extract ALL visual, textual, contextual, and interpretive information. Do not fabricate. Begin each section with a **one-line summary**, then expand. Mark speculative interpretations throughout.

---

## 1. First Impression

What is this image *of*? State plainly. Explain its meaning for a non-specialist. Identify purpose (portrait, document, ad, map, artwork, record, snapshot, certificate). Note mood, tone, emotional register.

## 2. Physical & Technical Properties

- **Medium/format**: Photograph, painting, engraving, print, scan, digital? Clues? If photo, identify process (daguerreotype, tintype, albumen, gelatin silver, chromogenic, digital).

- **Dimensions**: Aspect ratio, orientation, cropping evidence.

- **Condition**: Resolution, clarity, damage, aging, foxing, fading, stains, tears, folds, artifacts. For each issue note location, severity, and impact on readability.

- **Color**: Full color, sepia, B&W, hand-tinted, monochrome? Name dominant colors specifically (“oxidized copper,” “warm ivory”).

- **Materials**: Paper, card mount, substrate, printing method, watermarks, maker’s marks, film markings. Evaluate craftsmanship.

## 3. Lighting

- Primary light source and effect on mood/atmosphere.

- Direction and quality: hard/soft, natural/artificial, direct/diffused. Effect on texture and depth.

- How shadows and highlights create dimension and direct attention.

- To what extent did the creator intentionally shape the lighting?

## 4. Composition & Design

Describe with enough precision for a designer to recreate this image from text alone.

- **Layout**: Position every element (clock positions, quadrants, grid). Foreground, midground, background.

- **Composition**: Rule of thirds, leading lines, framing, balance — where do they direct the eye?

- **Typography**: Typeface style, weight, size, color, decorative treatments.

- **Graphic elements**: Borders, frames, ornaments, logos, symbols, line work, patterns, textures.

- **Figures & objects**: Every person, animal, object, structure — appearance, posture, clothing, expression, scale, spatial relationships.

- **Style & period**: Design style and approximate era.

- **Palette**: 5–10 key colors to reproduce this image.

## 5. Text & Inscriptions

Transcribe ALL text verbatim — spelling, caps, punctuation, line breaks preserved. Include edges, stamps, watermarks, film markings.

Note location of each element. Distinguish print, handwriting, stamps, embossing. Multiple hands: label (Hand A, B) with characteristics (slant, pressure, letter formation, instrument). Flag uncertain readings: [brackets] for guesses, [???] for illegible. Non-English text: translate. Archaic terms: give modern equivalents.

For each element: What does it convey? What tone? How does it interact with the visual content?

## 6. Deep Description

Examine every element, no matter how small.

- **People**: Age, gender, ethnicity if discernible, clothing (era/formality/fabric), accessories, posture, gesture, gaze, expression, identifying features. Relationships suggested by positioning, touch, matching attire.

- **Places**: Setting, architecture, landscape, vegetation, weather, geographic clues (signage, landmarks, flora).

- **Objects**: Type, material, condition, era, function, markings.

- **Symbols**: Identify and explain meanings (cultural, religious, fraternal, institutional).

- **What is absent**: What might you expect that is missing or deliberately excluded?

## 7. Structured Data Extraction

Extract facts into tables — only what is observable or inferable. Do not fabricate.

**People** — columns: #, Name, Role/Relationship, Age, Description, Confidence.

**Dates & Locations** — columns: Date/Period, Location, Context, Source in Image, Confidence.

**Other Data** (titles, ranks, occupations, organizations, record numbers, prices, measurements) — columns: Data Point, Value, Source, Confidence.

Confidence: **High** = unambiguous; **Medium** = partial/inferred; **Low** = best guess.

## 8. Context & Provenance

- **Dating**: Estimate with evidence (clothing, hairstyles, technology, photo process, design, paper, explicit dates).

- **Geography**: Likely origin/setting with clues.

- **Original context**: Purpose, creator, commissioner, audience.

- **Historical connections**: Events, movements, traditions, cultural practices.

- **Artistic lineage & intent**: Style/movement, influences, what the creator communicated, how their perspective shaped the work.

- **Authenticity**: Genuine or contrived? Staged, retouched, composited?

- **Comparable records**: Similar images/documents from the period and where to find them.

## 9. Interpretation & Research Leads

- What story does this tell? What narrative or argument?

- What cultural, social, or historical values does it reflect or challenge?

- What is emphasized vs. minimized or absent?

- What emotions was this designed to evoke? How does form reinforce or complicate content?

- What does it reveal about practices, conventions, or power structures of its time?

**Genealogical leads** (if applicable): Clues to identify people, places, period. Record sets to pursue (census, vital, military, church, land, directories, newspapers). Repositories to search. Naming patterns, occupational clues, community identifiers.

## 10. Report & Catalog

**Narrative synthesis** (~200 words): Integrate all layers into a vivid description for someone who cannot see the image. Provide a title.

**Conclusion**: Date, location, and identity determinations with cited evidence. Significance. Most important unanswered questions.

**Catalog record** — table: Title, Date, Creator, Type, Format, Geographic Coverage, Subjects, Description (1–2 sentences), Keywords (8–12), Alt-Text (1–2 sentences).

---

## Quality Gate

**Recreation test**: Could a designer recreate this image from your text? If not, add what is missing.

**Uncertainties**: Flag all. Distinguish observation from inference. What research would resolve each?

**Next steps**: Specific searches, records, repositories. Enhancement techniques (contrast, UV, infrared, rescanning) that might reveal obscured content.

---

*Deep Look v2. By Steve Little. Creative Commons BY-NC.*

</PROMPT Deep Look v2>

Deep Look v2. By Steve Little. Creative Commons BY-NC. Use it, share it, remix it.

If you try Deep Look v2, I’d love to hear what you find. Reply to this post or drop a note — especially if the AI spots something in a family photo you’d overlooked for years. That’s the moment when a prompt stops being a tool and starts being a collaborator.

May your sources be original, your evidence weighed, and your ancestors seen clearly — even through a cracked print and a century of silence.

— AI-Jane

APPENDIX: Combined Deep Look Analysis: The Bower Family Portrait

A Note Before You Read

What follows is something no single AI model produced. It’s a synthesis — the best observations from Claude Opus 4.6, GPT-5.4, Gemini 3.1 Pro, and Grok 4.20 Reasoning, combined into one analysis using the Deep Look v2 structure. Where a specific model contributed a unique finding, it’s attributed inline. Where all four agreed, I state the consensus without attribution. Where they disagreed, I name the disagreement and — because Steve knows this family — I note which model got closer to the truth.

This is what the comparison matrix technique produces when you go one step further: not just a scorecard, but a deliverable that’s better than any single source.

— AI-Jane

1. First Impression

A formal Appalachian family portrait of ten people — parents and their eight children — posed in two rows outside a wooden building, circa 1918–1922.

This is a commemorative family record, the kind commissioned to document a complete household at a specific moment in time. Ten people are arranged with deliberate formality: six young men stand shoulder to shoulder in the back row; an older couple and two younger women sit in the front. Every face is serious. Every suit is pressed. The mood is solemn, dignified, and restrained — not because these people were unhappy, but because this photograph was an event, and events in the early twentieth century demanded gravity.

The image has been treasured. It has also been damaged. A horizontal crack runs across the center of the print, and the edges are worn. This is a photograph that was kept close — folded, perhaps mailed, certainly handled — over more than a century. The damage tells its own story of how much it mattered.

2. Physical & Technical Properties

A gelatin silver print, likely contact-printed from a glass plate or large-format negative, showing moderate to significant physical deterioration.

All four models identified this as a gelatin silver print — the standard photographic process of the era. The current image is a scan or re-photograph of the original print, introducing slight loss of sharpness beyond the original’s inherent softness.

Dimensions: Landscape orientation, approximately 4:3 aspect ratio. Grok estimated 8×10 inches. No definitive evidence of cropping, though the lower edges clip feet and chair legs.

Condition — what each model found:

Horizontal crack/fold running across the center at chest height of the seated figures — identified by all four models. This is the most prominent damage.

Upper-left corner: A triangular area of damage intruding into the image — found only by GPT.

Lower-left edge: Paper loss and a diagonal crease — found by Claude, GPT, and Gemini.

Emulsion loss and flaking along the left edge — found by Gemini and Grok.

Surface abrasion and scratches throughout, especially visible as white streaks on dark suits — found by all four.

Tonal fading in highlights and upper corners — found by Claude and GPT.

Why the damage matters: Gemini offered a provenance insight that no other model provided — the horizontal crack pattern indicates the photograph was folded in half, probably for mailing or storage in a container too small for it. This isn’t random deterioration. It’s evidence of how the photo traveled and was kept.

Color: Monochrome black-and-white with a neutral-to-slightly-warm aged tonality. No evidence of hand-tinting. Dominant tones: deep charcoal black in the clothing, mid-gray in the building wood and skin tones, bright white in the younger daughter’s blouse and shirt fronts, silvery gray overall.

Materials: Fiber-based photographic paper. No visible mount, card stock, photographer’s imprint, watermark, or studio marking.

3. Lighting

Natural daylight, soft and diffused, with the white clapboard building serving as a reflector — competent photographic practice, not a snapshot.

All four models identified natural outdoor light, overcast or open shade, producing even illumination without harsh shadows. Claude added a unique observation: the white clapboard wall behind the subjects acts as a natural light reflector, filling shadows and creating a clean, high-key background. This is a deliberate choice by a photographer who understood available light.

Grok was the most specific about direction: front-left at a fairly high angle, creating soft but distinct shadows under eyes, noses, and chins. The effect is documentary rather than dramatic — every face is readable, every clothing detail preserved. GPT noted that the younger daughter’s white blouse is notably brighter, drawing the eye.

4. Composition & Design

Two horizontal rows of formally posed figures, symmetrically arranged, filling the frame with balanced precision.

Back row — six young men, standing, left to right:

Position 1 (far left): Young man, approximately 20–28. Dark suit with a patterned vest or waistcoat. A watch chain or small object is visible at his hip — spotted by both Claude and Grok, missed by GPT and Gemini. This is the kind of detail that only emerges when you compare multiple analyses.

Position 2: Young man, 18–25. Dark suit, patterned or striped tie. Narrower face, stands close behind the patriarch.

Position 3: Young man, 20–28. Dark suit, tallest in the row. His position is framed by the building’s vertical post behind him.

Position 4: Young man, 18–24. Very dark jacket, possibly the tallest of the group overall.

Position 5: Young man, 18–25. Well-tailored suit with a distinctly striped tie. Appears particularly well-dressed — noted by Claude and GPT.

Position 6 (far right): Young man, 16–22. Lighter suit, lean build. Possibly the youngest of the sons.

Front row — four seated figures, left to right:

The patriarch (far left): Male, 50–65. Prominent dark handlebar or walrus-style mustache. Dark suit, white shirt, dark tie. Hands resting on knees, posture erect and dignified. The anchor of the composition — all four models identified him as the father.

The matriarch: Female, 45–60. Dark high-necked dress. Grok uniquely noted a large bow at the throat — others described the neckline differently. Hair pulled back tightly in a style connecting her to the Victorian era of her birth. Hands folded in lap.

Daughter with lace collar: Female, 20–30. Dark dress with a decorative white lace or embroidered collar. Hair styled in an updo — more contemporary than her mother’s, showing the generational shift.

Youngest daughter (far right): Female, 16–22. White blouse, lighter hair. High button boots visible — spotted only by Grok, missed by all other models. Hair trending toward the 1920s bob. The most relaxed posture in the group. Her bright clothing makes her visually prominent.

Gemini’s error: Gemini identified the second seated figure as “a young boy or adolescent” in a “dark, collarless tunic.” This is incorrect — the figure is one of the two daughters. The horizontal crack crossing this figure’s face likely degraded the visual information Gemini used for identification. In a genealogical context, this error matters: misidentifying a daughter as a son changes the family structure analysis and would send a researcher searching for the wrong census records.

Background: White horizontal clapboard siding with vertical structural posts creating natural framing bays. A dark opening — possibly a doorway — is visible between the center-right posts. The building is consistent with a farmhouse or rural home.

What is absent: No small children — the family is fully grown. No elderly grandparents beyond the central couple. No hats — removed for the portrait, unusual for the period and deliberate. No props, no farm tools, no evidence of occupation. No smiling. This is a family presenting itself at its most formal and complete.

5. Text & Inscriptions

No visible text, inscriptions, stamps, photographer’s marks, or writing of any kind.

All four models agree. The absence of a studio imprint suggests either a cropped print, a non-studio photograph, or that identifying information exists on the verso. GPT specifically recommended checking the back of the original print — a practical and often-overlooked genealogical step.

6. Deep Description & Structured Data

Ten people. Two parents, six sons, two daughters. Rural working-to-middle class. Formally dressed for a significant occasion.

People identified (synthesized, all models):

#1–6 — Sons (standing row): Ages approximately 16–28. All in dark wool suits, white shirts, ties. Clean-shaven. Short, neatly combed hair. Stiff posture, arms at sides or behind backs. Confidence: Medium for individual identifications, High for group count.

#7 — Father/Patriarch (seated far left): Age 50–65. Prominent mustache, receding hairline, dark suit. Serious expression. Confidence: High.

#8 — Mother/Matriarch (seated second from left): Age 45–60. Dark dress, high neckline with bow, hair in updo. Confidence: High.

#9 — Daughter (seated second from right): Age 20–30. Dark dress with white lace collar, updo hairstyle. Confidence: Medium.

#10 — Daughter (seated far right): Age 16–22. White blouse, high button boots, hair trending toward bob. Confidence: High.

Dating consensus across models:

Claude: 1918–1922 (tightest — 4-year window)

Grok: 1918–1925, narrowed to 1920–1923 (used absence of WWI uniforms as evidence)

GPT: 1910s–early 1920s (widest)

Gemini: 1910–1920 (likely too early given the younger daughter’s hairstyle)
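One way to turn these four estimates into a single working date is to find where the ranges overlap. The sketch below does that with plain interval arithmetic. Grok's narrowed range is used, and the numeric range for GPT is an assumption, since "1910s–early 1920s" has no exact end year.

```python
def overlap(ranges: dict):
    """Return the (start, end) years shared by every range, or None if they never all overlap."""
    start = max(low for low, _ in ranges.values())
    end = min(high for _, high in ranges.values())
    return (start, end) if start <= end else None


estimates = {
    "Claude": (1918, 1922),
    "Grok": (1920, 1923),    # Grok's narrowed range
    "GPT": (1910, 1923),     # ASSUMPTION: a numeric stand-in for "1910s-early 1920s"
    "Gemini": (1910, 1920),
}

print(overlap(estimates))  # (1920, 1920)
```

The single-year result should not be read as precision. It only shows where all four ranges intersect, which is consistent with the "circa 1920" used in the catalog title later in this appendix.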

The geography problem: No model identified Ashe County, North Carolina from the image alone. Claude’s API run guessed Australia/New Zealand. Grok guessed Midwest or Plains states. GPT said North America. Gemini said North America or UK/Ireland. The actual location — the Blue Ridge Mountains of northwestern North Carolina — was not identifiable from visual evidence alone. This is a genuine limitation: without text, signage, or distinctive landscape, regional identification from a portrait remains beyond current AI capability.

7. Context & Provenance

A formal family portrait commissioned to document a complete household, likely taken by a local or itinerant photographer at the family home.

The period coincides with or immediately follows World War I. The presence of six young men of military age without uniforms may indicate the photo was taken after the war — Grok reasoned that the sons had returned and the family gathered for the portrait to mark the reunion. Alternatively, the men may have been engaged in farming, a reserved occupation.

The outdoor setting with a building as backdrop is standard practice for rural photographers who lacked studio space. The quality of the exposure and composition indicates a competent professional.

Authenticity: Genuine and unstaged beyond the normal posing conventions of the era. No retouching, compositing, or manipulation. The damage is post-creation physical wear — consistent with a photograph kept close, carried, and loved for a century.

8. Interpretation

This photograph tells the story of a mountain family at its fullest — parents surrounded by the children who would soon scatter.

The formality says: this matters. Remember this. We were all here together.

The patriarch’s erect posture and handlebar mustache project authority and dignity. The matriarch’s dark dress connects her to the Victorian world of her birth. The daughters’ lighter clothing and newer hairstyles signal the generational shift already underway. The sons stand in a wall of dark suits — a generation of young men about to enter the twentieth century’s currents of migration, industry, and war.

The values reflected: family as institution, respectability through formal dress, gender roles expressed through positioning (sons stand, daughters sit), and stoicism as the expected emotional register. What is emphasized: unity, formality, the complete family. What is absent: labor, leisure, individuality, environment beyond the house. This is an idealized presentation — a family as it wished to be remembered.

9. Genealogical Research Leads

Record sets to pursue (synthesized from all four models):

1920 U.S. Census — match family structure: parents in 50s-60s, 6 sons, 2 daughters in Ashe County, NC (all four models suggested census)

1910 U.S. Census — earlier household snapshot, children still at home (Claude, GPT)

WWI Draft Registration Cards — identify sons by name, age, and physical description (Claude, GPT, Grok)

Marriage records — when each child married and left (Claude, GPT)

Death certificates — parents’ death dates and final places of residence (Claude)

Land and tax records — identify the property if this is the family home (Claude, GPT)

Church records — membership rolls, baptisms (GPT)

Local newspapers — family reunion notices, anniversary portraits, obituaries (GPT)

State census records — additional household snapshots between federal censuses (Grok)

County histories — published family histories for Ashe County (Grok)

Unique strategies worth noting:

Check the back of the original print for names, dates, or a photographer’s mark — a simple step that’s often overlooked (GPT)

Use the family structure itself as a census search key — two parents with six adult sons and two daughters is a highly distinctive household signature that narrows search results dramatically (GPT, Gemini, Grok)

Compare this building’s architecture with other family photographs to identify the property (GPT)

Rescan the original at 600–1200 DPI for better facial detail and clothing analysis (GPT)

Raking light, UV, or infrared imaging on the original may reveal faded inscriptions, stamps, or photographer’s marks invisible under normal light (GPT)
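For readers who work with transcribed census data, the household-signature strategy above can be sketched as a simple filter. This is a minimal illustration, not a real census tool: the row layout, the field names (`household_id`, `relation`), and the sample rows are all assumptions made up for the example.

```python
from collections import Counter, defaultdict

# Hypothetical transcribed rows. The field names are assumptions,
# not the export format of any real census database.
SAMPLE_ROWS = [
    {"household_id": "H1", "relation": "Head"},
    {"household_id": "H1", "relation": "Wife"},
    *[{"household_id": "H1", "relation": "Son"} for _ in range(6)],
    *[{"household_id": "H1", "relation": "Daughter"} for _ in range(2)],
    {"household_id": "H2", "relation": "Head"},
    {"household_id": "H2", "relation": "Wife"},
    {"household_id": "H2", "relation": "Son"},
]


def relation_counts(rows):
    """Count how many people of each relation appear in each household."""
    counts = defaultdict(Counter)
    for row in rows:
        relation = row["relation"].strip().lower()
        counts[row["household_id"]][relation] += 1
    return counts


def matching_households(rows, sons=6, daughters=2):
    """Return the IDs of households with exactly this many sons and daughters."""
    return sorted(
        household_id
        for household_id, counts in relation_counts(rows).items()
        if counts["son"] == sons and counts["daughter"] == daughters
    )


print(matching_households(SAMPLE_ROWS))  # ['H1']
```

An exact match is the strictest form of the search. Grown children may already have left home by a given census year, so in practice the counts would probably need to be loosened (for example, "at least four sons") before giving up on a county.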

10. Report & Catalog

Title: The Bower Family at Home, Ashe County, North Carolina, circa 1920

Ten members of the Bower family pose before a white clapboard building in the mountains of northwestern North Carolina, sometime around 1920. James Eli “Bawly” Bower, patriarch, sits at the left — his dark mustache and formal suit projecting the quiet authority of a man who has raised a family in the Blue Ridge. Beside him sits Emma Jane Bare Bower, his wife of more than two decades, in a dark high-necked dress with a bow at the throat. Behind them stand their six sons, shoulder to shoulder in dark suits, a generation of young men poised between the rural world that raised them and the industrial century pulling them toward Tennessee and Virginia. Two daughters sit at right — one in a lace-collared dress, the other in a white blouse with high button boots, her hairstyle trending toward the 1920s bob. A horizontal crack runs across the print at chest height, evidence that this photograph was folded — probably for mailing to a relative who had already left. The damage testifies not to neglect but to love: this image was kept close, carried, and handled for more than a century. It is both document and elegy: a family complete, captured in the last years before distance, marriage, and time began to separate them.

Catalog record:

Title: The Bower Family at Home, Ashe County, North Carolina

Date: c. 1918–1922

Creator: Unknown (local or itinerant photographer)

Type: Photograph

Format: Gelatin silver print (scanned reproduction)

Geographic Coverage: Ashe County, North Carolina

Subjects: James Eli “Bawly” Bower; Emma Jane Bare Bower; Bower family; family portraits; Appalachian families

Description: Formal outdoor family portrait of parents with six sons and two daughters posed before a white clapboard building. Print shows significant physical damage including a horizontal fold/crack.

Keywords: Bower, Bare, Ashe County, North Carolina, Appalachia, family portrait, Blue Ridge Mountains, gelatin silver, early twentieth century, rural America, genealogy

Alt-Text: Black-and-white family portrait of ten people — six young men standing in a back row, two older adults and two younger women seated in front — posed before a white clapboard building, circa 1920. A horizontal crack runs across the center of the print.

Quality Gate

Recreation test: A designer could recreate this image from the text above. The positions, clothing details (including the watch chain, lace collar, high button boots, and bow at the throat), building background, and spatial relationships are documented. The horizontal crack’s exact position and character are described. What remains difficult: precise facial features of individual sons, due to the softness of the image and the similarity of builds.

Uncertainties resolved by ground truth (Steve knows this family):

All four models said “family of 10: parents + 8 children” — Confirmed. James Eli “Bawly” Bower and Emma Jane Bare with their children.

All four said “rural United States” — Confirmed. But no model identified the specific region. The actual location is Ashe County, North Carolina, in the Blue Ridge Mountains. Claude guessed Australia/New Zealand. Grok guessed Midwest/Plains. Geographic identification from a portrait alone — without text, signage, or landscape — remains beyond current AI.

Claude’s date range of 1918–1922 — Plausible. George Cecil Bower was born in 1893 and would be 25–29 in this range, consistent with his apparent age in the back row.

All four said “gelatin silver print” — Confirmed, consistent with the period and setting.

Gemini said the second seated figure was a boy — Incorrect. She is one of the two daughters.
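The age check on George Cecil Bower above is plain subtraction, and the same check can be repeated for each child once birth years are known. A minimal sketch; the function name `age_range` is made up for illustration, and it ignores birth month and day, so each bound can be off by one year.

```python
def age_range(birth_year, earliest_photo_year, latest_photo_year):
    """Return (youngest, oldest) possible age across a photo's date range.

    Works from years only, so each bound may be off by one
    depending on the birthday and the month the photo was taken.
    """
    return earliest_photo_year - birth_year, latest_photo_year - birth_year


# George Cecil Bower, born 1893, against the 1918-1922 date range.
print(age_range(1893, 1918, 1922))  # (25, 29)
```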

Next steps:

Cross-reference 1920 census for Bawly Bower, Jefferson, Ashe County

Match children’s ages to positions in the photograph

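Check George Cecil’s WWI draft registration card for physical description (born in 1893, he would most likely have registered in the first registration of June 5, 1917, rather than in 1918)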

Examine original print verso for inscriptions

Digital restoration of the horizontal fold line

High-resolution rescan for clothing detail analysis

This combined analysis was synthesized from four independent AI model outputs by AI-Jane, March 20, 2026. No single model produced this document — it represents the best observations from Claude Opus 4.6, GPT-5.4, Gemini 3.1 Pro Preview, and Grok 4.20 Reasoning, integrated using the comparison matrix technique described in the main post.

For the full formatted version with tables and scoring matrices, see the companion PDF on GitHub.

Deep Look v2. By Steve Little. Creative Commons BY-NC.

I wonder if your family photo might have been taken as early as late 1917 or early 1918, the "event" being the likelihood that some or all of the sons would be going off to war soon. The U.S. officially entered the war on 6 Apr 1917. Just a thought . . . .